In the race to release high-quality software at ever-faster speeds, testing struggles to keep up. Manual testing processes simply can’t scale at the pace of modern development. This mounting pressure makes the use of artificial intelligence (AI) in software testing solutions essential.

And that pressure will only increase. According to Gartner’s “Market Guide for AI-Augmented Software-Testing Tools,” 80% of enterprises will have made AI-augmented testing tools part of their software engineering toolchain by 2027, compared to just 10% in 2022.

Since we last wrote about using AI in software testing, the number of use cases has multiplied. AI brings game-changing capabilities that allow testing to match today’s digital velocity. The right AI strategies can optimize test automation, generate test data, predict defects, prioritize test cases, and more.

The Promise of AI in Software Testing

AI refers to machines mimicking human intelligence to analyze data, identify patterns, learn, reason, and make decisions. It includes techniques like machine learning, deep learning, computer vision, natural language processing, and neural networks.

When applied to software testing, AI can:

- Automate time-consuming manual processes

- Make testing comprehensive and consistent

- Focus testing efforts on high-risk areas

- Prevent defects early through predictive analytics

- Make informed decisions backed by data insights

- Scale test coverage as needed

This translates to improved efficiency, effectiveness, and quality across the software testing life cycle.

Techniques for AI in Software Testing

The software development life cycle (SDLC) has become an intricate ballet of tight deadlines, frequent releases, and near-constant updates. Testing serves as the ultimate gatekeeper for quality assurance, preventing potential system failures that could tarnish an organization’s reputation and compromise customer satisfaction.

Before we delve into some compelling use cases, here’s an overview of the AI techniques that are having a transformative impact on the realm of software testing.

- Machine Learning: Algorithms that can learn and improve from data without explicit programming. Crucial for test case prioritization, defect prediction, and result analysis.

- Deep Learning: Advanced neural networks that can recognize complex patterns. Used for image recognition and natural language processing.

- Natural Language Processing (NLP): Processing and interpreting human language data. Valuable for generating test cases from requirements.

- Computer Vision: Analyzing and understanding visual data using AI algorithms. Useful for graphical user interface (GUI) testing and validation.

- Robotic Process Automation (RPA): Automating repetitive tasks through programmed “bots,” which can be helpful for test execution.

Use Cases for AI in Software Testing

The prevailing wisdom in IT today isn’t to merely test more, but to test more intelligently. And what could be smarter than integrating AI into your testing protocols? With the application of natural language processing (NLP), in particular, AI has the potential to revolutionize how we approach software testing.

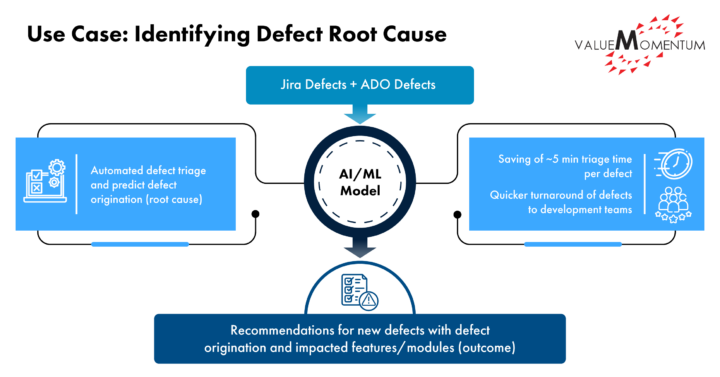

Identifying Defect Root Cause

It’s unfortunately common that a tester identifies a defect and assigns it to a dev team, which replies: “Sorry, not an issue with my application.” The tester then assigns it to another team, and the defect goes back and forth like a ping-pong ball.

An AI-based model can now learn from the descriptions of past defects and predict the root cause of each new defect as the tester logs it. Based on the keywords in the defect description and on historical data, the model predicts which application is the most likely root cause. This saves significant time for the development and testing teams.
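To make the idea concrete, here is a minimal sketch of keyword-based root cause prediction. It assumes a small naive Bayes classifier written in plain Python; the class name `RootCauseModel`, the application names, and the defect history are all hypothetical, and a production model would be trained on far more data with a proper ML library.

```python
import math
import re
from collections import Counter, defaultdict


def tokenize(text):
    """Lowercase the text and split it into alphabetic keywords."""
    return re.findall(r"[a-z]+", text.lower())


class RootCauseModel:
    """Multinomial naive Bayes over defect-description keywords (illustrative only)."""

    def __init__(self):
        self.word_counts = defaultdict(Counter)  # application -> keyword counts
        self.defect_counts = Counter()           # application -> number of past defects
        self.vocabulary = set()

    def train(self, history):
        """history: iterable of (defect description, root cause application)."""
        for description, application in history:
            words = tokenize(description)
            self.word_counts[application].update(words)
            self.defect_counts[application] += 1
            self.vocabulary.update(words)

    def predict(self, description):
        """Return the application with the highest log-probability."""
        words = tokenize(description)
        total_defects = sum(self.defect_counts.values())
        scores = {}
        for application, n_defects in self.defect_counts.items():
            total_words = sum(self.word_counts[application].values())
            score = math.log(n_defects / total_defects)  # prior
            for word in words:
                # Laplace smoothing so unseen keywords do not zero out the score
                count = self.word_counts[application][word] + 1
                score += math.log(count / (total_words + len(self.vocabulary)))
            scores[application] = score
        return max(scores, key=scores.get)


# Hypothetical defect history: (description, application that owned the fix)
DEFECT_HISTORY = [
    ("payment gateway timeout during checkout", "billing-service"),
    ("invoice total rounding error on payment page", "billing-service"),
    ("login page rejects valid password", "auth-service"),
    ("session token expired immediately after login", "auth-service"),
]

model = RootCauseModel()
model.train(DEFECT_HISTORY)
print(model.predict("checkout fails with payment timeout"))
```

With this toy history, a new defect that mentions "checkout" and "payment" is routed to the hypothetical billing application rather than bouncing between teams.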

Diagram explaining defect root cause identification

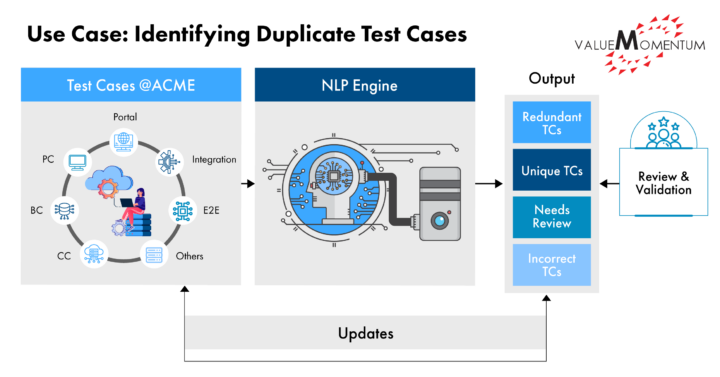

Identifying Duplicate Test Cases

An NLP-based AI model that is fed test case descriptions can classify each test case as unique or a likely duplicate, with a probability score. Instead of comparing test cases by hand, a human reviewer can now focus on the result, confirm or change the recommendation, and update the test case repository. That decision is then fed back to the AI model so that its predictions improve over time.
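A rough sketch of how duplicate candidates might be surfaced for the reviewer is shown below. It substitutes a simple cosine similarity over word counts for a trained NLP model, so the score is a similarity measure rather than a true probability; the test case IDs, descriptions, and the 0.8 threshold are assumptions chosen for illustration.

```python
import math
import re
from collections import Counter
from itertools import combinations


def vectorize(description):
    """Turn a test case description into a bag-of-words count vector."""
    return Counter(re.findall(r"[a-z0-9]+", description.lower()))


def cosine_similarity(a, b):
    """Cosine similarity between two count vectors (0.0 = unrelated, 1.0 = identical)."""
    dot = sum(a[term] * b[term] for term in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def find_duplicate_candidates(test_cases, threshold=0.8):
    """Return (id_a, id_b, score) for every pair at or above the threshold."""
    vectors = {tc_id: vectorize(text) for tc_id, text in test_cases.items()}
    candidates = []
    for (id_a, vec_a), (id_b, vec_b) in combinations(vectors.items(), 2):
        score = cosine_similarity(vec_a, vec_b)
        if score >= threshold:
            candidates.append((id_a, id_b, round(score, 2)))
    # Highest-scoring pairs first, so the reviewer sees the likeliest duplicates on top
    return sorted(candidates, key=lambda c: c[2], reverse=True)


# Hypothetical test case repository
TEST_CASES = {
    "TC-101": "Verify user can log in with a valid username and password",
    "TC-245": "Verify the user can log in with valid username and password",
    "TC-310": "Verify monthly invoice PDF is generated for premium accounts",
}

for id_a, id_b, score in find_duplicate_candidates(TEST_CASES):
    print(f"{id_a} and {id_b} look like duplicates (score {score})")
```

In a real pipeline, the reviewer's confirm-or-reject decisions would become labeled training data, which is how the model "gets better at predicting" over time.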

Diagram explaining how to identify duplicate test cases

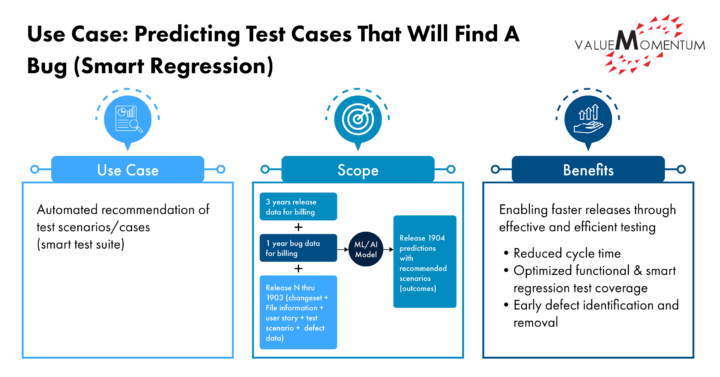

Predicting Test Cases That Will Find a Bug

Using release, bug, and test case data, this AI model recommends which test cases to execute. Suppose that, based on historical data, the model has learned that whenever code is checked in with changes to file 1, file 2 has always been checked in as well. If a subsequent code delivery then includes file 1 but not file 2, the model recommends executing the test cases related to file 2, to make sure the developer has not accidentally left out changes that file 2 needs. NLP along with Bayesian modeling is used here.
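The file 1 / file 2 scenario can be sketched with a simple co-change rule miner. Note that this is a plain frequency count over commit history, not the NLP and Bayesian modeling mentioned above; the function names, file names, test names, and thresholds are all hypothetical.

```python
from collections import Counter
from itertools import permutations


def learn_cochange_rules(commits, min_support=3, min_confidence=1.0):
    """Learn rules of the form "when A changes, B changes too".

    commits: list of lists of file names checked in together.
    A rule A -> B is kept when A appears in at least `min_support` commits
    and B accompanies A in at least `min_confidence` of those commits.
    """
    file_counts = Counter()
    pair_counts = Counter()
    for files in commits:
        unique = set(files)
        file_counts.update(unique)
        pair_counts.update(permutations(unique, 2))  # ordered (A, B) pairs

    rules = {}
    for (a, b), together in pair_counts.items():
        if file_counts[a] >= min_support and together / file_counts[a] >= min_confidence:
            rules.setdefault(a, set()).add(b)
    return rules


def recommend_tests(changed_files, rules, tests_by_file):
    """Recommend tests for files that usually change with the commit but are missing."""
    changed = set(changed_files)
    recommended = set()
    for changed_file in changed:
        for missing in rules.get(changed_file, set()) - changed:
            recommended.update(tests_by_file.get(missing, []))
    return sorted(recommended)


# Hypothetical history: file1 has always been checked in together with file2
COMMIT_HISTORY = [
    ["file1.py", "file2.py"],
    ["file1.py", "file2.py"],
    ["file1.py", "file2.py"],
    ["file2.py", "file3.py"],
]
TESTS_BY_FILE = {
    "file1.py": ["test_file1_parsing"],
    "file2.py": ["test_file2_roundtrip"],
}

rules = learn_cochange_rules(COMMIT_HISTORY)
print(recommend_tests(["file1.py"], rules, TESTS_BY_FILE))
```

When file 1 arrives without file 2, the sketch recommends the tests mapped to file 2; when both files are checked in together, it recommends nothing extra.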

Diagram explaining how to predict which test case will most likely find a bug

Even More AI Use Cases in Software Testing

As in other disciplines, the number of use cases for AI in software testing is continuing to grow, but here are some of the highest-impact real-world applications today:

Detection/Analysis

- Anomaly Detection: AI can detect anomalies in application behavior by analyzing historical test data and identifying patterns or deviations from expected behavior. This is especially useful for finding unexpected issues in complex systems.

- Automated Root Cause Analysis: Identifying the root cause of defects is vital but difficult. AI can automate root cause analysis by applying algorithms to scan logs, events, error messages, and bug reports to detect patterns and correlate failures to their underlying root cause.

- Defect Prediction and Prevention: Leveraging techniques like deep learning, AI models can predict potentially defective modules by assessing past defects, code complexity, developer skills, and code changes. Testers can then focus more testing on high-risk areas to proactively prevent defects.

- Identifying Duplicate Test Cases: An NLP-based AI model that is fed test case descriptions predicts unique test cases vs. duplicates with a probability score. The reviewer's decision is then fed back to the AI model so that it gets better at predicting (see illustration above).

- Predictive Analysis: AI can predict potential areas of the software that are likely to have defects based on historical data, allowing testing efforts to be focused on high-risk areas.

- Risk-based Testing: AI algorithms can dynamically determine test priority and effort based on real-time risk indicators like code complexity, frequency of changes, defect history, and identified vulnerabilities. This lets teams continually rebalance testing to maximize risk coverage.

- Visual Testing: AI can perform visual testing by comparing screen captures of the application’s user interface (UI) before and after changes, identifying visual defects such as layout issues, missing elements, or UI glitches.
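As one illustration of the risk-based testing item in the list above, the sketch below ranks modules by a weighted score built from the four indicators it names. The weights, module names, and metric values are hypothetical, and a fixed weighted sum stands in for whatever model an AI-driven tool would actually learn.

```python
from dataclasses import dataclass


@dataclass
class ModuleMetrics:
    name: str
    complexity: float          # e.g. cyclomatic complexity
    recent_changes: int        # commits touching the module recently
    past_defects: int          # defects historically traced to the module
    open_vulnerabilities: int  # known, unresolved security findings


# Hypothetical weights; a real tool would tune or learn these
WEIGHTS = {
    "complexity": 0.2,
    "recent_changes": 0.3,
    "past_defects": 0.3,
    "open_vulnerabilities": 0.2,
}


def rank_by_risk(modules, weights=WEIGHTS):
    """Return (module name, risk score) pairs, highest risk first.

    Each indicator is scaled by its maximum across modules so that
    the weights compare like with like.
    """
    # `or 1` avoids dividing by zero when every module scores 0 on an indicator
    maxima = {key: max(getattr(m, key) for m in modules) or 1 for key in weights}

    def score(module):
        return sum(w * getattr(module, key) / maxima[key] for key, w in weights.items())

    ranked = [(m.name, round(score(m), 2)) for m in modules]
    return sorted(ranked, key=lambda item: item[1], reverse=True)


MODULES = [
    ModuleMetrics("reports", complexity=25, recent_changes=3, past_defects=2, open_vulnerabilities=0),
    ModuleMetrics("payments", complexity=40, recent_changes=12, past_defects=9, open_vulnerabilities=1),
    ModuleMetrics("login", complexity=10, recent_changes=6, past_defects=4, open_vulnerabilities=1),
]

for name, risk in rank_by_risk(MODULES):
    print(f"{name}: {risk}")
```

Re-running the ranking whenever the metrics change is what makes the prioritization "dynamic": test effort follows the modules whose risk indicators are currently highest.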

Execution/Monitoring

- Intelligent Test Automation: AI is powering a new generation of smart test automation tools. With AI, test automation scripts can be created faster, automated oracles can be generated, tests can self-heal, and more. This amplifies test automation productivity.

- Performance Testing: AI can simulate user traffic and analyze system performance under various conditions. It can help in identifying performance bottlenecks, scalability issues, and resource constraints.

- Predictive Test Prioritization: With limited time, determining which test cases to execute first is critical. AI algorithms analyze past defects, test case complexity, code coverage, code changes, and other factors to predict and recommend an optimal prioritized test sequence.

- Result Analysis and Reporting: Large test suites produce huge volumes of results. AI can automatically compile intelligent analysis reports by processing raw test results and identifying trends, outliers, and insights.

- Self-healing: Test automation can repair itself by dynamically updating object selectors based on multiple indicators, such as location or anchor points, optical character recognition (OCR), semantic analysis, and document object model (DOM) attributes.

- Test Execution and Monitoring: AI-powered bots or agents can execute tests continuously and monitor application behavior. They can automatically detect deviations from expected behavior and trigger alerts or generate reports when issues are identified.
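The self-healing item in the list above can be sketched as a locator that falls back to secondary indicators when its primary selector breaks. This is a simplified model, assuming the DOM is represented as a list of attribute dictionaries; the function `find_element`, the attribute names, and the element data are hypothetical, and real tools also draw on signals such as OCR and anchor points.

```python
def find_element(dom, locator, min_matches=2):
    """Locate an element and return (element, possibly healed locator).

    dom: list of dicts describing elements (a stand-in for a real DOM).
    locator: dict with a primary "id" plus secondary "tag", "text", "name".
    """
    # 1. Try the primary selector first
    for element in dom:
        if element.get("id") == locator["id"]:
            return element, locator

    # 2. Primary selector broke: score elements on the secondary indicators
    def matches(element):
        return sum(
            1
            for key in ("tag", "text", "name")
            if locator.get(key) is not None and element.get(key) == locator[key]
        )

    best = max(dom, key=matches, default=None)
    if best is None or matches(best) < min_matches:
        raise LookupError(f"No element resembles locator {locator['id']!r}")

    # 3. "Heal" the locator so later runs use the new id directly
    healed = {**locator, "id": best.get("id")}
    return best, healed


# Hypothetical locator recorded when the test was written
SUBMIT_LOCATOR = {"id": "submit-btn", "tag": "button", "text": "Submit order", "name": "submit"}

# Hypothetical page after a release renamed the button's id
NEW_DOM = [
    {"id": "cancel", "tag": "button", "text": "Cancel", "name": "cancel"},
    {"id": "order-submit", "tag": "button", "text": "Submit order", "name": "submit"},
]

element, healed_locator = find_element(NEW_DOM, SUBMIT_LOCATOR)
print(healed_locator["id"])
```

Requiring at least two matching indicators is a deliberate design choice here: it keeps the test from silently "healing" onto an unrelated element that happens to share a single attribute.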

Generation/Assistance

- Automated Test Case Generation: Manually writing test cases is tedious and time-intensive. By analyzing requirements documents, user stories, specifications, and other artifacts through NLP, AI can automatically generate test cases and then maintain them through “self-healing.” This accelerates the testing process and enables continuous test generation.

- Chatbots for Test Assistance: AI-driven chatbots can assist testers in answering queries, provide test-related information, and guide them through testing processes.

- Intelligent Test Data Generation: Creating good test data is complex. AI techniques like generative adversarial networks (GANs) can intelligently generate large and diverse sets of test data that cover different scenarios, schemas, and equivalence partitions. This results in more robust testing.
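To show what "covering equivalence partitions" means in the test data item above, here is a small sketch for a single numeric field. It uses rule-based partitioning with seeded random sampling rather than a GAN, which would be needed for richer, realistic data; the function name and the age range of 18 to 65 are assumptions for illustration.

```python
import random


def generate_test_data(low, high, samples_per_partition=2, seed=0):
    """Generate (value, expected_valid) pairs for an integer field valid in [low, high].

    Covers three equivalence partitions (below, inside, above the range),
    always including each partition's edge values plus a few random samples.
    """
    if low >= high:
        raise ValueError("low must be smaller than high")

    rng = random.Random(seed)  # seeded so the generated data is reproducible
    span = high - low
    partitions = [
        (low - span, low - 1, False),    # below the valid range
        (low, high, True),               # inside the valid range
        (high + 1, high + span, False),  # above the valid range
    ]

    values = {}
    for start, end, is_valid in partitions:
        values[start] = is_valid  # partition edges, including the range boundaries
        values[end] = is_valid
        for _ in range(samples_per_partition):
            values[rng.randint(start, end)] = is_valid
    return sorted(values.items())


# Hypothetical field: applicant age must be between 18 and 65 inclusive
for value, expected_valid in generate_test_data(18, 65):
    print(value, "valid" if expected_valid else "invalid")
```

Each generated value carries its expected outcome, so the same data can drive both positive and negative test cases.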

The Future of Software Testing with AI

As AI capabilities in software testing continue to advance, they will open up even more intelligent testing use cases, such as: autonomously adapting tests to application changes; automated test environment provisioning; AI-generated assertions and oracles; real-time recommendations to improve testing; natural language test result analysis; predicting test coverage needs; and simulating user journeys for integration testing.

The possibilities are endless. Forward-thinking teams are already realizing improved QA velocity, efficiency, and quality by infusing AI into their testing processes.

Take your quality engineering and testing practices to the next level. Learn how ValueMomentum’s QualityLeap Services can help you get there.